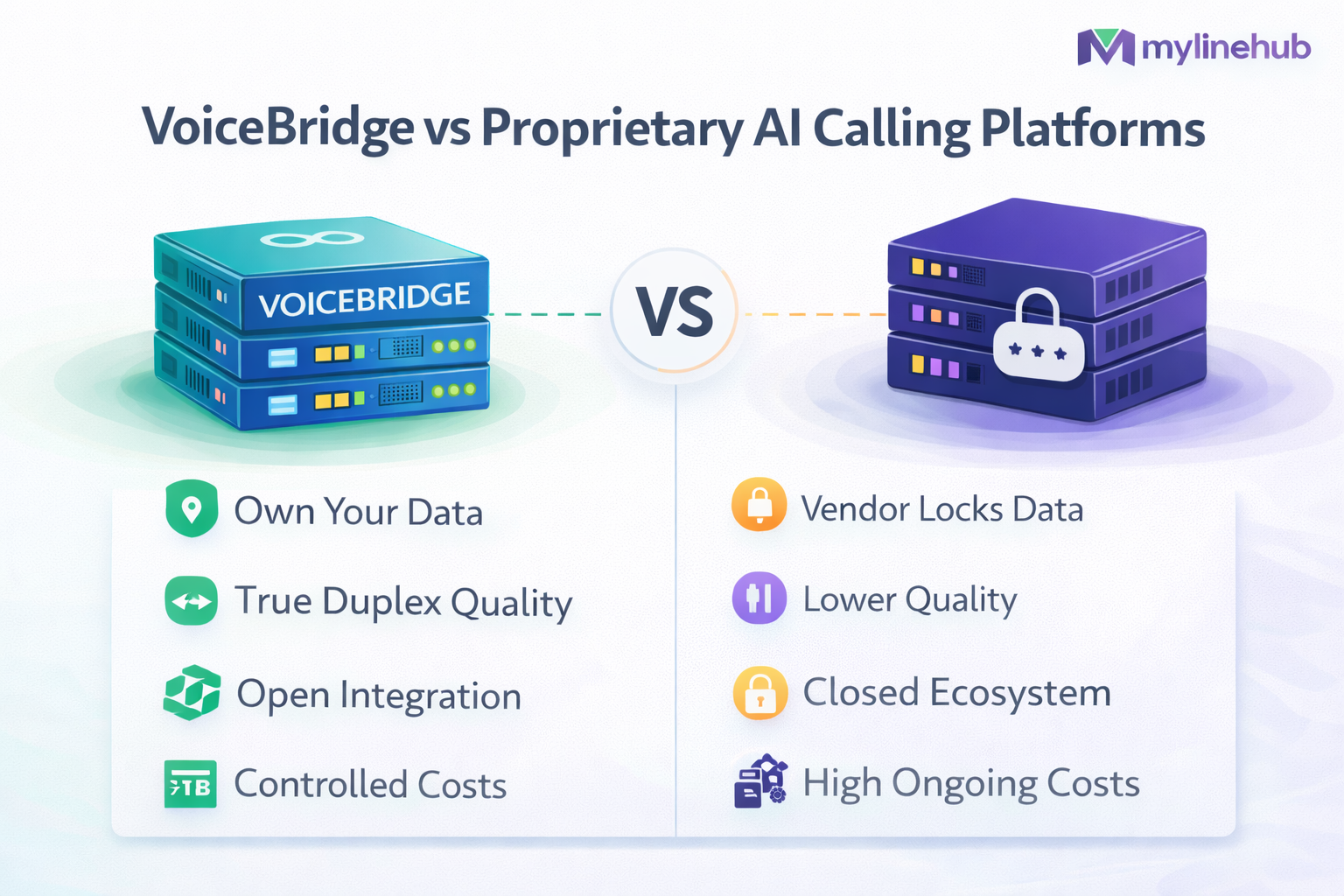

VoiceBridge vs Proprietary AI Calling Platforms

Compare VoiceBridge with proprietary AI calling platforms across data ownership, duplex quality, integration depth, and long-term costs.

“AI calling platform” can mean very different things: some vendors are basically a cloud dialer with an LLM bolted on, others are a closed CPaaS + bot runtime, and a few are full-stack “voice agent” products that hide telephony and media details completely.

MYLINEHUB VoiceBridge takes a different path: it is an open-source, RTP-correct, production-grade full-duplex bridge designed to sit next to your existing Asterisk/FreePBX and connect it to real-time AI (STT/TTS/LLM) without changing your PBX internals.

This is not a “features list” comparison. It’s an architecture and operations comparison: data ownership, media quality, barge-in correctness, debuggability, latency control, scale patterns, and long-term cost.

VoiceBridge repository:

https://github.com/mylinehub/omnichannel-crm/tree/main/mylinehub-voicebridge

Canonical architecture reference:

https://mylinehub.com/articles/mylinehub-voicebridge-architecture

What Proprietary AI Calling Platforms Usually Are

Most proprietary AI calling platforms bundle three layers into a single closed system:

- Telephony entry: SIP trunks, DID management, outbound dialing, caller ID, compliance templates

- Media layer: audio capture, audio injection, jitter buffering, recording, sometimes WebRTC

- AI layer: STT, LLM orchestration, tools/actions, TTS, conversation memory

The advantage: fast time-to-demo. The cost: you inherit an opaque system where the most important telecom problems (RTP timing, NAT, jitter, barge-in) are hard to verify and harder to fix when something breaks.

What VoiceBridge Is (In Concrete Engineering Terms)

VoiceBridge is a Java service that connects to Asterisk/FreePBX using ARI and builds a duplex media graph that:

- captures caller audio via RTP

- streams it to the AI in real time

- injects AI speech back as RTP with correct headers, timestamps, and pacing

- supports interruption (barge-in) by truncating bot output immediately
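To make “correct headers, timestamps, and pacing” concrete, here is a minimal sketch of RTP packetization for G.711 audio (8 kHz, 20 ms frames, one byte per sample). It follows the fixed 12-byte header layout from RFC 3550. The class name and constructor are hypothetical and are not taken from the project's RtpPacketizer.java.

```java
import java.nio.ByteBuffer;

/** Illustrative RTP packetizer for G.711; a sketch, not the project's RtpPacketizer. */
final class RtpPacketizerSketch {
    static final int HEADER_BYTES = 12;

    private final int payloadType; // 0 = PCMU, 8 = PCMA (static types from RFC 3551)
    private final int ssrc;        // stays constant for the whole stream
    private int sequence;          // 16-bit, +1 per packet
    private long timestamp;        // 32-bit, advances by samples carried

    RtpPacketizerSketch(int payloadType, int ssrc, int initialSequence, long initialTimestamp) {
        this.payloadType = payloadType;
        this.ssrc = ssrc;
        this.sequence = initialSequence & 0xFFFF;
        this.timestamp = initialTimestamp & 0xFFFFFFFFL;
    }

    /** Wraps one audio frame (160 bytes for 20 ms of G.711) in an RTP header. */
    byte[] packetize(byte[] payload) {
        ByteBuffer buf = ByteBuffer.allocate(HEADER_BYTES + payload.length); // big-endian by default
        buf.put((byte) 0x80);                 // V=2, P=0, X=0, CC=0
        buf.put((byte) (payloadType & 0x7F)); // M=0, 7-bit payload type
        buf.putShort((short) sequence);
        buf.putInt((int) timestamp);
        buf.putInt(ssrc);
        buf.put(payload);

        sequence = (sequence + 1) & 0xFFFF;                       // wraps at 65535
        timestamp = (timestamp + payload.length) & 0xFFFFFFFFL;   // G.711: 1 byte == 1 sample
        return buf.array();
    }
}
```

The point of the sketch is that sequence numbers and timestamps advance by fixed steps regardless of how bursty the upstream AI audio is; when each packet leaves the socket is a separate pacing concern.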

Where the key “production guarantees” live in the repository

- ARI media graph (bridges + external media): src/main/java/com/mylinehub/voicebridge/ari/impl/AriBridgeImpl.java, src/main/java/com/mylinehub/voicebridge/ari/impl/ExternalMediaManagerImpl.java

- RTP correctness (packetizing, NAT symmetry, port planning): src/main/java/com/mylinehub/voicebridge/rtp/RtpPacketizer.java, src/main/java/com/mylinehub/voicebridge/rtp/RtpSymmetricEndpoint.java, src/main/java/com/mylinehub/voicebridge/rtp/RtpPortAllocator.java

- Session lifecycle (per-call state and cleanup): src/main/java/com/mylinehub/voicebridge/session/CallSession.java, src/main/java/com/mylinehub/voicebridge/session/CallSessionManager.java

- Realtime AI + barge-in truncation: src/main/java/com/mylinehub/voicebridge/ai/RealtimeAiClient.java, src/main/java/com/mylinehub/voicebridge/ai/impl/RealtimeAiClientImpl.java, src/main/java/com/mylinehub/voicebridge/ai/impl/OpenAiRealtimeTruncateManager.java

- Deployment/container baseline: docker/Dockerfile, docker-compose.yml, .env.example, src/main/resources/application.properties

The Most Important Question: Who Owns the Media Plane?

In voice AI, the “media plane” is where production issues live: not in the prompt, not in the LLM — in the audio pipeline.

Proprietary platforms

- You do not control RTP pacing rules

- You cannot easily confirm whether audio is truly duplex on-wire

- You often cannot debug with Wireshark at the correct boundary

- You rely on vendor support for “why does it stutter at peak hours?”

VoiceBridge

- You control the RTP pipeline (packetization, timestamps, sequencing)

- You can inspect call health deterministically and reproduce issues

- You can enforce strict NAT-safe behavior (symmetric RTP learning)

- You can tune and scale your media workers without changing the PBX

In VoiceBridge, RTP is not a side effect — it is a first-class engineered subsystem, mainly inside rtp/RtpPacketizer.java and rtp/RtpSymmetricEndpoint.java.
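“Symmetric RTP learning” generally means sending media back to the address a peer's packets actually arrive from, rather than to the address announced in signaling, because NAT may have rewritten it. The sketch below shows that idea in its simplest form, latching onto the first observed source. The class and method names are hypothetical; how RtpSymmetricEndpoint.java implements this is not shown here.

```java
import java.net.InetSocketAddress;
import java.util.concurrent.atomic.AtomicReference;

/** Illustrative symmetric-RTP latch: learn the peer's real address from inbound traffic. */
final class SymmetricRtpLatchSketch {
    private final InetSocketAddress signaled; // address announced in signaling (may be wrong behind NAT)
    private final AtomicReference<InetSocketAddress> learned = new AtomicReference<>();

    SymmetricRtpLatchSketch(InetSocketAddress signaled) {
        this.signaled = signaled;
    }

    /** Call for every inbound packet. Returns true only when this packet set the latch. */
    boolean onInbound(InetSocketAddress source) {
        return learned.compareAndSet(null, source);
    }

    /** Where outbound RTP should go: the learned source if any, else the signaled address. */
    InetSocketAddress sendTarget() {
        InetSocketAddress peer = learned.get();
        return peer != null ? peer : signaled;
    }
}
```

Latching once is a deliberate simplification: it ignores stray packets from other sources after the first, but it also will not follow a NAT rebinding mid-call. A production endpoint has to pick a policy for that tradeoff.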

Latency Reality: Average Latency Is Not the Problem, Tail Latency Is

A call feels “human” when the system avoids long spikes. Vendors often report average latency; real users experience tail latency: the occasional 500–1200ms stalls that break turn-taking and make barge-in fail.
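A small worked example shows why the average hides the problem. The sketch below computes the mean and nearest-rank percentiles over a set of response latencies. The sample values used in the note after it are made up purely for illustration and are not measurements of any platform.

```java
import java.util.Arrays;

/** Illustrative latency summary: mean versus nearest-rank percentiles. */
final class LatencyPercentiles {
    /** Nearest-rank percentile for p in (0, 100]. */
    static long percentile(long[] samplesMs, int p) {
        if (samplesMs.length == 0) throw new IllegalArgumentException("no samples");
        long[] sorted = samplesMs.clone();
        Arrays.sort(sorted);
        int rank = (int) Math.ceil(p * sorted.length / 100.0); // 1-based rank
        return sorted[Math.max(rank, 1) - 1];
    }

    static double mean(long[] samplesMs) {
        return Arrays.stream(samplesMs).average().orElse(Double.NaN);
    }
}
```

With 97 hypothetical turns at 200 ms and 3 turns stalled at 1000 ms, the mean is 224 ms and the median is 200 ms, which both look healthy, while the 99th percentile is 1000 ms. Those three turns are the ones where the caller talks over the bot.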

Where proprietary platforms usually lose

- shared multitenant media clusters under unpredictable load

- hidden buffering policies (great in a demo, laggy under stress)

- fixed STT/TTS pipelines with limited ability to tune packet pacing

How VoiceBridge is designed to win

- RTP clock isolation: AI may be bursty, RTP must stay steady. That discipline is implemented where packets are emitted (packetizer + scheduling).

- Immediate truncation: when the caller interrupts, bot output is stopped fast, not “when the buffer drains”. Truncation logic is a real component: ai/impl/OpenAiRealtimeTruncateManager.java.

- Local adjacency: you can deploy VoiceBridge close to Asterisk to remove WAN jitter from the PBX-to-bridge path. Proprietary platforms typically force PBX-to-cloud over the internet (or force you to move trunks).
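One common way to keep the RTP clock steady while the AI side is bursty is to schedule every frame against an absolute deadline (start + n × 20 ms) instead of sleeping 20 ms after each send. A late wakeup then delays one frame without shifting all later ones. The following is a minimal sketch of that technique under the assumption of 20 ms frames; the class is hypothetical and does not describe VoiceBridge's actual scheduler.

```java
/** Illustrative drift-free pacing clock for 20 ms RTP frames. */
final class PacedClockSketch {
    static final long FRAME_NANOS = 20_000_000L; // 20 ms

    private final long startNanos;
    private long framesSent;

    PacedClockSketch(long startNanos) {
        this.startNanos = startNanos;
    }

    /** Absolute deadline of the next frame; independent of when earlier frames really left. */
    long nextDeadlineNanos() {
        return startNanos + framesSent * FRAME_NANOS;
    }

    /** How long to wait before sending the next frame; 0 if we are already late. */
    long delayNanos(long nowNanos) {
        return Math.max(0L, nextDeadlineNanos() - nowNanos);
    }

    void markSent() {
        framesSent++;
    }
}
```

In a sender loop this would typically be driven by System.nanoTime() and a wait such as LockSupport.parkNanos(clock.delayNanos(now)). If frame 1 goes out 3 ms late, frame 2 is due only 17 ms later, so the error does not accumulate.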

Full Duplex and Barge-In: The Feature That Separates “IVR AI” from “Conversational AI”

Most “AI calling” systems are still turn-based in practice. They may capture audio continuously, but they cannot stop speaking naturally when the customer interrupts.

What happens in many proprietary systems

- caller talks over bot

- bot keeps playing because audio is already buffered

- conversation becomes unnatural and frustrating

What VoiceBridge does differently

- caller audio is continuously streamed in

- bot audio is continuously streamed out

- interruption triggers a “cut-through” policy that stops bot output immediately

This is a session-level, media-level decision, not a prompt trick: that is why VoiceBridge models calls explicitly via session/CallSession.java and session/CallSessionManager.java, and why truncation exists as a real component.
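The cut-through idea can be sketched as an outbound frame queue that is cleared the moment an interruption is detected, while keeping track of how much bot audio the caller actually heard. That played offset is what a provider-side truncation request usually needs; for example, the OpenAI Realtime API's conversation.item.truncate event takes an audio_end_ms value. The class below is a hypothetical illustration under the assumption of 20 ms frames, not the logic of OpenAiRealtimeTruncateManager.java.

```java
import java.util.ArrayDeque;

/** Illustrative barge-in queue: buffered bot audio is dropped immediately on interruption. */
final class BargeInQueueSketch {
    static final int FRAME_MS = 20;

    private final ArrayDeque<byte[]> pending = new ArrayDeque<>();
    private int playedMs; // bot audio already sent to the caller in the current utterance

    /** AI side: append synthesized audio, possibly in bursts. */
    synchronized void enqueue(byte[] frame) {
        pending.addLast(frame);
    }

    /** Pacer side: called once per 20 ms tick; null means nothing to play. */
    synchronized byte[] pollForSend() {
        byte[] frame = pending.pollFirst();
        if (frame != null) playedMs += FRAME_MS;
        return frame;
    }

    /** Barge-in: drop all unsent audio and report how many ms the caller heard. */
    synchronized int truncate() {
        pending.clear();
        int heardMs = playedMs;
        playedMs = 0; // the next utterance starts from zero
        return heardMs;
    }

    synchronized int pendingFrames() {
        return pending.size();
    }
}
```

If the AI has pushed one second of speech (50 frames) and the caller interrupts after three frames, truncate() discards the remaining 47 frames at once and returns 60, instead of letting 940 ms of stale audio play out.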

Integration Depth: PBX Control vs PBX Replacement

Many proprietary platforms succeed by replacing your PBX workflow: “bring your numbers here, use our dialer UI, use our recording.”

That can be fine for greenfield startups. It is painful for companies that already depend on FreePBX dialplans, queues, time conditions, ring groups, call routing policies, compliance rules, and support operations.

VoiceBridge integration strategy

- Keep Asterisk/FreePBX as the system of record for telephony

- Use ARI to control and bridge media without rewriting PBX internals

- Inject AI where it belongs: as a media participant, not as the PBX owner

ARI integration is implemented in the ARI layer: ari/impl/AriBridgeImpl.java and ari/impl/ExternalMediaManagerImpl.java.
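For orientation, Asterisk exposes external media through the ARI REST endpoint POST /channels/externalMedia, which takes at least app, external_host, and format parameters. The sketch below only builds that request URI. The base URL, Stasis application name, and RTP host are placeholder values, and the helper is hypothetical rather than a copy of ExternalMediaManagerImpl.java.

```java
import java.net.URI;
import java.net.URLEncoder;
import java.nio.charset.StandardCharsets;

/** Illustrative builder for an ARI externalMedia request URI. */
final class ExternalMediaRequestSketch {
    /**
     * @param ariBase  e.g. "http://pbx.example:8088/ari" (placeholder host)
     * @param app      Stasis application name that will own the channel
     * @param rtpHost  address where the bridge listens for RTP from Asterisk
     * @param rtpPort  UDP port allocated for this call
     * @param format   audio format, e.g. "ulaw"
     */
    static URI externalMediaUri(String ariBase, String app, String rtpHost, int rtpPort, String format) {
        String query = "app=" + enc(app)
                + "&external_host=" + enc(rtpHost + ":" + rtpPort)
                + "&format=" + enc(format);
        return URI.create(ariBase + "/channels/externalMedia?" + query);
    }

    private static String enc(String value) {
        return URLEncoder.encode(value, StandardCharsets.UTF_8);
    }
}
```

The resulting URI would be sent as an authenticated POST (for instance with java.net.http.HttpClient), and the returned channel is then added to a mixing bridge alongside the caller's channel. The PBX dialplan only has to hand the call to the Stasis application.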

Debuggability: The Hidden Cost of “Managed” Platforms

Debugging voice issues is a different discipline than debugging web apps. You need to answer questions like:

- Did Asterisk send RTP to the correct IP:port?

- Did SSRC change mid-call?

- Are timestamps monotonic and paced correctly?

- Is NAT rewriting happening on the path?

- Is the bot sending bursts instead of 20ms frames?
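Several of these questions can be answered mechanically from the RTP headers alone. The sketch below parses the fixed 12-byte header and flags an SSRC change, a sequence gap, or an unexpected timestamp step. It assumes 20 ms G.711 frames with no silence suppression (so the timestamp should advance by 160 per packet), and all names are hypothetical. Detecting bursts in arrival time would additionally need capture timestamps, which this sketch does not look at.

```java
import java.nio.ByteBuffer;

/** Illustrative per-stream RTP sanity check; assumes 20 ms G.711, no silence suppression. */
final class RtpStreamCheckSketch {
    private static final long EXPECTED_TS_STEP = 160; // samples per 20 ms at 8 kHz

    private boolean seenFirst;
    private int lastSeq;
    private long lastTimestamp;
    private int lastSsrc;

    /** Returns "ok" or a short finding for this packet. */
    String inspect(byte[] packet) {
        if (packet.length < 12 || (packet[0] & 0xC0) != 0x80) return "not an RTP v2 packet";
        ByteBuffer buf = ByteBuffer.wrap(packet); // network byte order (big-endian)
        int seq = buf.getShort(2) & 0xFFFF;
        long ts = buf.getInt(4) & 0xFFFFFFFFL;
        int ssrc = buf.getInt(8);

        String finding = "ok";
        if (seenFirst) {
            long tsStep = (ts - lastTimestamp) & 0xFFFFFFFFL; // tolerates 32-bit wraparound
            if (ssrc != lastSsrc) finding = "SSRC changed mid-call";
            else if (seq != ((lastSeq + 1) & 0xFFFF)) finding = "sequence gap or reorder";
            else if (tsStep != EXPECTED_TS_STEP) finding = "unexpected timestamp step: " + tsStep;
        }
        seenFirst = true;
        lastSeq = seq;
        lastTimestamp = ts;
        lastSsrc = ssrc;
        return finding;
    }

    /** Builds a header-only RTP packet for experiments. */
    static byte[] header(int seq, long ts, int ssrc) {
        return ByteBuffer.allocate(12).put((byte) 0x80).put((byte) 0)
                .putShort((short) seq).putInt((int) ts).putInt(ssrc).array();
    }
}
```

Feeding it packets exported from a capture taken on your own infrastructure gives a quick first pass before opening the trace in Wireshark.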

Proprietary platform debugging (common reality)

- support ticket + “we’ll check logs”

- partial metrics without packet-level truth

- limited ability to reproduce the exact conditions

VoiceBridge debugging (what you can do)

- Packet-level verification with Wireshark on your own infrastructure

- Correlate sessions with your own logs and call IDs

- Inspect the RTP engine behavior and tune it if needed

Practical RTP handling and correctness are concentrated in rtp/RtpPacketizer.java and rtp/RtpSymmetricEndpoint.java, while the call lifecycle code ensures cleanup and traceability.

Security and Compliance: “Where Does My Audio Go?”

The highest-risk asset in AI calling is not the prompt. It is the call audio, the transcripts, and the customer’s PII.

Proprietary platforms (typical tradeoffs)

- audio leaves your network by default

- recordings and transcripts often live in vendor storage

- compliance and retention are vendor-specific, sometimes inflexible

- your incident response depends on vendor policies and timelines

VoiceBridge (what changes)

- you control where VoiceBridge runs (cloud, on-prem, hybrid)

- you control firewall boundaries between Asterisk, VoiceBridge, and AI endpoints

- you choose how/where to store recordings and transcripts

VoiceBridge’s deployment patterns and environment controls are meant to be explicit and auditable: src/main/resources/application.properties, .env.example, docker-compose.yml.

Scaling and Cost: Vendor “Per Minute” vs Infrastructure Economics

Proprietary platforms frequently stack several charges: per minute, per call, STT/TTS usage, “agent” fees, storage, and compliance add-ons. That can be reasonable at low volume and painful at scale.

VoiceBridge scaling model

VoiceBridge scales like a normal service: more calls → more media workers. The PBX remains your PBX; VoiceBridge is the duplex media bridge layer.

- Run multiple VoiceBridge instances behind a load balancer

- Pin RTP sessions (do not “spray” UDP packets across nodes)

- Use deterministic port ranges and firewall rules per node

- Use containerization for repeatable deployments

Deterministic media port planning is why a dedicated allocator exists: rtp/RtpPortAllocator.java.
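As a rough illustration of what deterministic port planning can look like, the sketch below hands out even-numbered RTP ports from a fixed per-node range, leaving each following odd port free for RTCP by convention. Firewall rules can then be written against exactly that range. The class is hypothetical and the range used in the note is a placeholder; it is not the project's RtpPortAllocator.java.

```java
import java.util.BitSet;

/** Illustrative allocator of even RTP ports from a fixed, firewall-friendly range. */
final class PortAllocatorSketch {
    private final int basePort; // first even port in the range
    private final int pairs;    // number of (RTP even, RTCP odd) pairs available
    private final BitSet used;

    PortAllocatorSketch(int minPort, int maxPort) {
        this.basePort = (minPort % 2 == 0) ? minPort : minPort + 1;
        this.pairs = (maxPort - basePort + 1) / 2;
        if (pairs <= 0) throw new IllegalArgumentException("empty port range");
        this.used = new BitSet(pairs);
    }

    /** Returns the lowest free even port, so allocation order is reproducible. */
    synchronized int allocate() {
        int slot = used.nextClearBit(0);
        if (slot >= pairs) throw new IllegalStateException("no free RTP ports");
        used.set(slot);
        return basePort + 2 * slot;
    }

    /** Must be called during call cleanup, otherwise the range leaks. */
    synchronized void release(int port) {
        int offset = port - basePort;
        if (offset < 0 || offset % 2 != 0 || offset / 2 >= pairs) {
            throw new IllegalArgumentException("port not managed by this allocator: " + port);
        }
        used.clear(offset / 2);
    }
}
```

With a range of 40000 to 40005, a node can carry three concurrent calls on ports 40000, 40002, and 40004. Giving each node its own non-overlapping range keeps a call's UDP flow pinned to one node.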

Important honesty

Open-source does not magically remove costs. It changes the cost profile from “vendor margin + lock-in” to “your infra + your ops”. For many serious telecom teams, that is a win because it is controllable and optimizable.
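The shift in cost profile can be written as simple arithmetic. If a vendor charges v per minute all-in, and self-hosting costs a fixed monthly amount F (infrastructure plus operations) plus a per minute for the chosen AI provider, the two bills are equal at F / (v − a) minutes per month. The sketch below computes that break-even point; the parameter names are hypothetical and the numbers in the note are placeholders, not real prices.

```java
/** Illustrative break-even calculation between per-minute pricing and self-hosting. */
final class BreakEvenSketch {
    /** Vendor bill for a month: everything is charged per minute. */
    static double vendorCost(double minutes, double vendorPerMinute) {
        return minutes * vendorPerMinute;
    }

    /** Self-hosted bill for a month: fixed infra/ops cost plus AI provider usage. */
    static double selfHostedCost(double minutes, double fixedMonthlyCost, double ownPerMinute) {
        return fixedMonthlyCost + minutes * ownPerMinute;
    }

    /** Monthly minutes at which both bills are equal: F / (v - a). */
    static double breakEvenMinutes(double fixedMonthlyCost, double vendorPerMinute, double ownPerMinute) {
        double savingPerMinute = vendorPerMinute - ownPerMinute;
        if (savingPerMinute <= 0) return Double.POSITIVE_INFINITY; // self-hosting never catches up
        return fixedMonthlyCost / savingPerMinute;
    }
}
```

With placeholder values F = 3000, v = 0.125, and a = 0.0625, the break-even is 48,000 minutes per month. Below that volume the vendor is cheaper, and above it every additional minute favors self-hosting. The useful exercise is plugging in your own quotes and your own ops cost.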

Lock-In: The Strategic Risk Most Teams Underestimate

Vendor lock-in in AI calling is not only API lock-in. It is:

- media lock-in: you cannot migrate your conversation logic without rebuilding the audio layer

- data lock-in: transcripts, recordings, evaluation metrics live in vendor formats

- workflow lock-in: routing, compliance, reporting become vendor-specific

VoiceBridge reduces lock-in because:

- it keeps the PBX as your telephony core

- it keeps the media integration open and inspectable

- it lets you swap AI providers (or run on-prem AI) without changing telephony

Side-by-Side Summary

| Dimension | Proprietary AI Calling Platform | MYLINEHUB VoiceBridge |
|---|---|---|
| Deployment | Vendor cloud (sometimes hybrid) | Your servers (on-prem/cloud/hybrid), container-friendly |
| PBX Integration | Often PBX replacement or trunk migration | Works with Asterisk/FreePBX via ARI without rewriting PBX logic |
| Duplex + Barge-in | Often limited by buffering / platform policy | Engineered duplex with explicit truncation control |
| RTP Debuggability | Opaque | Inspectable; RTP correctness is in code you control |
| Data Ownership | Often vendor storage by default | You decide where audio/transcripts live |
| Cost Model | Per-minute + add-ons | Infra economics + chosen AI provider costs |
| Lock-in | High (media + data + workflow) | Lower (open bridge layer, PBX retained) |

How to Decide (A Practical Rule)

Choose a proprietary platform if:

- you need a demo immediately and you can accept vendor dependency

- your telephony workflows are simple or you’re willing to migrate them

- you don’t need deep control over duplex, timing, and packet-level debugging

Choose VoiceBridge if:

- you need real conversational duplex with reliable barge-in

- you run serious call volume and need predictable scaling economics

- you must keep data and recordings under your policy control

- you already rely on FreePBX/Asterisk logic and do not want PBX replacement

Reference: “Where to Look in the VoiceBridge Codebase”

If you want to validate that VoiceBridge is a real engineered media bridge (not a demo script), these are the most relevant areas:

- ARI bridge/media graph: src/main/java/com/mylinehub/voicebridge/ari/impl/AriBridgeImpl.java, src/main/java/com/mylinehub/voicebridge/ari/impl/ExternalMediaManagerImpl.java

- RTP engine: src/main/java/com/mylinehub/voicebridge/rtp/RtpPacketizer.java, src/main/java/com/mylinehub/voicebridge/rtp/RtpSymmetricEndpoint.java, src/main/java/com/mylinehub/voicebridge/rtp/RtpPortAllocator.java

- Per-call lifecycle: src/main/java/com/mylinehub/voicebridge/session/CallSession.java, src/main/java/com/mylinehub/voicebridge/session/CallSessionManager.java

- Streaming AI + truncation: src/main/java/com/mylinehub/voicebridge/ai/impl/RealtimeAiClientImpl.java, src/main/java/com/mylinehub/voicebridge/ai/impl/OpenAiRealtimeTruncateManager.java

- Deployment baseline: docker/Dockerfile, docker-compose.yml, src/main/resources/application.properties

Repo: https://github.com/mylinehub/omnichannel-crm/tree/main/mylinehub-voicebridge
